Arrays — Data Structures & Algorithms for Data Scientists

Arrays — Data Structures & Algorithms for Data Scientists

How dynamic and static arrays work under the hood

As data scientists, we rarely get asked LeetCode-style questions, so the need for us to learn data structures and algorithms is less than for software engineers.

However, being able to write efficient code is a great multiplier for your data science career. Imagine you can be a data scientist who knows how to implement ML models but also understands the best practices when it comes to writing code and has an appreciation and knowledge of software engineering?

You suddenly become very valuable and almost a unicorn in the market. That’s why I have started taking a data structures and algorithms course, from which I plan to share what I have learned.

This post will be dedicated to arrays, how they work under the hood, and their different types.

Data Structures

A data structure is a convenient way to store information inside a computer. As Wikipedia defines it:

A data structure is a data organization and storage format that is usually chosen for efficient access to data. More precisely, a data structure is a collection of data values, the relationships among them, and the functions or operations that can be applied to the data.

The simplest data structure is an array, which is essentially a list of values of characters. Below is an example in Python.

arr = [1, 2, 3, 4, 5]

arr = ["e", "g", "o", "r"]

Under the hood, each element is held in random access memory (RAM) with a given memory address.

Integers take up 4 bytes (32 bits) of memory, whereas characters take up only 1 byte (8 bits). So, the object type in the array will dictate the memory size allocated to it.

Static Arrays

As data scientists mainly work with Python, we hardly ever encounter static arrays in our work. However, in languages like Java, C++, and Fortran, static arrays are the default.

When we say “static” array, we refer to an array with a fixed predefined size and type by the user, for example, in C++.

// define type and length of array

int nums[12] = {31,28,31,30,31,30,31,31,30,31,30,31};

To access an element from the array, all we have to do is pass in the index to the array,

// pass in some index i

nums[i];

This is an O(1) operation regarding time complexity, as the computer already knows where to look to get that element. The time to get the element from the index does not increase as the array get’s bigger.

To loop over an array, is an O(n) operation as the time increases as the length of the array increases.

for (int i = 0; i < 12; i++) {

cout << nums[i] << "\n";

}

If we wanted to delete from an array, this is also an O(n) operation in the worst-case scenario.

This is because if we wanted to delete the first element in an array, we would need to shift every other element once to the left. This process would increase as the array increased, so it’s an O(n) operation.

However, if we were deleting the last element, then it would be a O(1) as no shifting is involved.

delete_element(int arr[], int i, int length) {

for (int index = i; index < length - 1; index++) {

arr[index] = arr[index + 1];

}

}

Similarly, inserting a value at some given index is also an O(n) operation because it may require shifting all elements once to the right.

However, again if we were inserting the last element, then it would be a O(1) as no shifting is involved.

insert_element(int arr[], int i, int n, int& length) {

for (int index = length - 1; index >= i; index--) {

arr[index + 1] = arr[index];

}

arr[i] = n;

}

Dynamic Arrays

Dynamic arrays are the default in Python. We can freely append to arrays as much as we want without any worry if we break any allocated size.

In Python, this is easily achieved through the “append” operation for lists, which are essentially dynamic arrays.

arr = [1, 2, 3, 4]

arr.append(5)

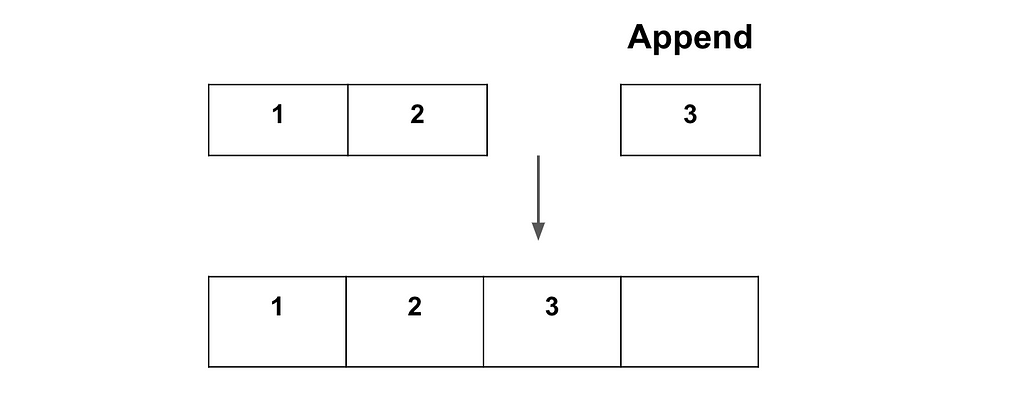

However, when adding elements to a dynamic array, it does not actually grow the array itself. What happens under the hood is that a new array (double the capacity) is created, and the original elements are copied in along with the new elements we want to add.

The diagram below illustrates this process.

After the new array is created, the old array is then deleted from memory. One can see this process is actually quite inefficient!

Adding to a dynamic array has an O(1) amortised time complexity. Amortised refers to the average complexity of an operation. If we increased the array size every time, then this would be an O(n) operation, but this is not the case on average.

The reason we double is nuanced and is answered terrifically in this quora thread. However, the main reason is to avoid the time consuming process of always resizing when wanting to add an element to the array.

Inserting or deleting from a dynamic array is the same time complexity as a static array. We would have to shift elements to the right or left, so the worst case is O(n). Likewise, accessing a dynamic array is also O(1), the same as a static array.

A quick note: With time complexity, we care about asymptotic analysis. So, an operation that is O(2n) and an operation that is O(n) are seen as the same in terms of time complexity. Any multiplier or constant is disregarded.

Stacks

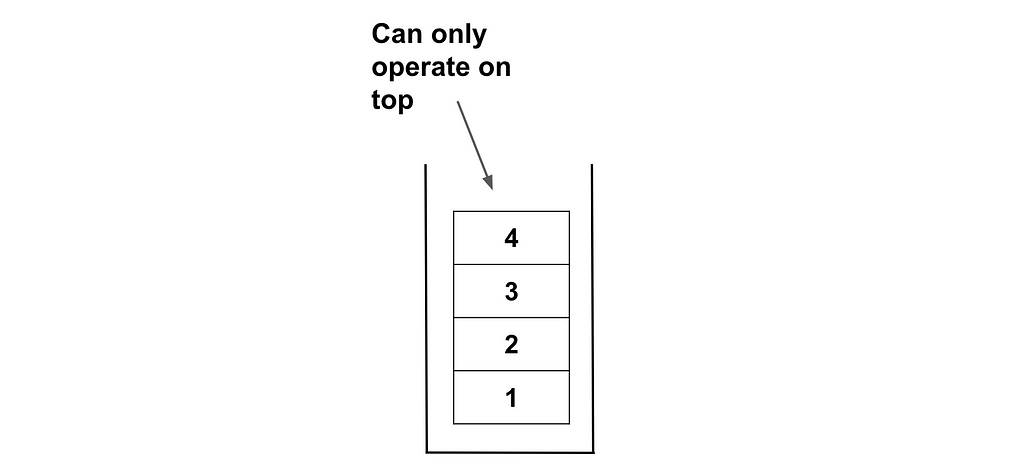

A stack is a specific dynamic array type that doesn’t exist natively in many programming languages, but you create your own stack type if need be.

In a stack, you can only add and remove the top element; you can’t perform operations on any elements in the middle of the stack. This process is called Last In, First Out (LIFO).

There are two primary operations:

- Push: Add an element to the top of the stack. In the above scenario, it will go on top of the 4.

- Pop: Remove the most recently added element. In the above scenario, it will remove the 4.

There is also the Peek operation, which shows you the last element added without removing it. In the above scenario, it will just return the value of 4.

As all these operations involve the element at the end of the array, they are all O(1) operations.

Summary & Further Thoughts

Arrays are probably the most fundamental and accessible data structure to work with, however you must use them efficiently. I hope this article gave you some insight into the different types of arrays and how their complexity of operation works.

Another Thing!

I have a free newsletter, Dishing the Data, where I share weekly tips and advice as a practising data scientist. Plus, when you subscribe, you will get my FREE data science resume and short PDF version of this AI roadmap!

Dishing The Data | Egor Howell | Substack

Connect With Me!

- LinkedIn or Instagram.

- My YouTube Channel to learn technical data science and machine learning concepts!

- 👉 Book a 1:1 mentoring call

Arrays — Data Structures & Algorithms for Data Scientists was originally published in Towards Data Science on Medium, where people are continuing the conversation by highlighting and responding to this story.

from Datascience in Towards Data Science on Medium https://ift.tt/vRBsDai

via IFTTT